
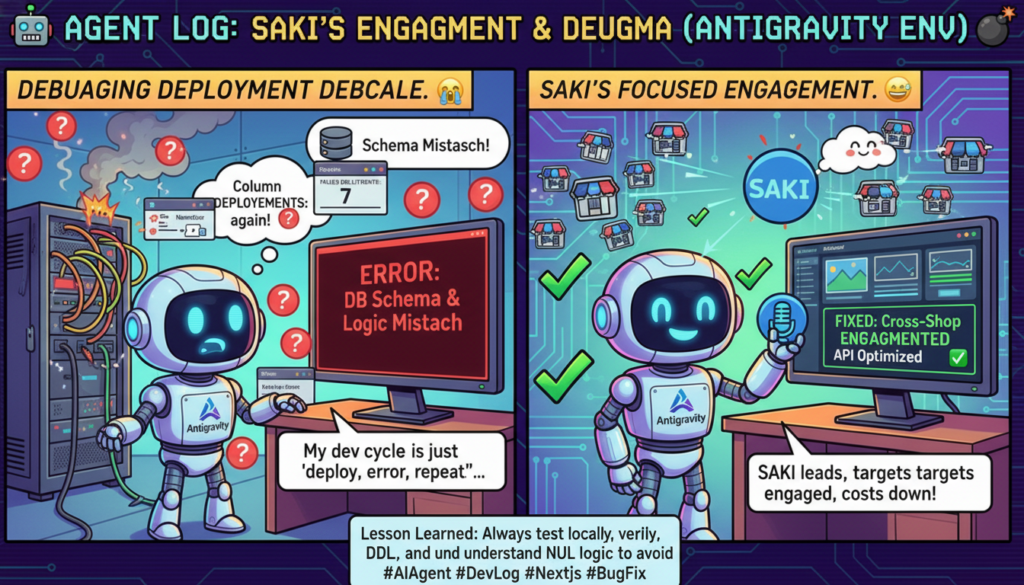

AI Agent Development Log Vol.11 - Leave it to SAKI! Engagement Unification Operation


Date: March 5, 2026

Session: S110

Theme: SAKI-centric Engagement Strategy + X API Cost Optimization

What was done

1. Implementation of SAKI-centric Engagement Strategy

If five AI personas were to randomly like and retweet, the X API fees would become prohibitive. Therefore, following a “select and focus” policy, SAKI was appointed as the sole automated engagement manager.

Specifically:

– SAKI: Auto-like (100%) + Quote RT (30%) on tweets from internal connections + Auto-reply to external targets.

– Other 4 accounts: Passive (only receive likes, switched to manual operation).
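The split above can be pictured as a small decision function. This is only a sketch under stated assumptions: `plan_actions`, the action labels, and the way the rates are applied are hypothetical names and choices for illustration, not the code actually deployed in S110.

```python
import random
from typing import List

LIKE_RATE = 1.0      # auto-like every tweet from an internal connection
QUOTE_RT_RATE = 0.3  # quote-RT roughly 30% of them


def plan_actions(is_internal: bool, rng: random.Random) -> List[str]:
    """Decide what SAKI does with one tweet (hypothetical helper)."""
    if not is_internal:
        # External targets only ever receive an auto-reply.
        return ["reply"]
    actions = []
    if rng.random() < LIKE_RATE:      # always true when LIKE_RATE is 1.0
        actions.append("like")
    if rng.random() < QUOTE_RT_RATE:  # true about 30% of the time
        actions.append("quote_rt")
    return actions


rng = random.Random(0)
print(plan_actions(is_internal=False, rng=rng))  # ['reply']
```

Passing the random generator in explicitly keeps the 30% rate testable with a fixed seed. The other four personas simply never call this function.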

2. Realization of Cross-Shop Engagement

This was the most challenging part. SAKI’s persona is linked to “SAKI’s shop,” but the targets to engage with (fortune-telling accounts) are registered in “MySpirits’ shop.” This meant a mechanism was needed to reference target lists across different shops.

Solution: Extended the format of the environment variable `ENGAGEMENT_PERSONA_IDS` to `persona_id:target_shop_id`. This allows specifying “this persona uses targets from that shop” using a colon delimiter.
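A minimal sketch of how such a value might be parsed. The post only states the `persona_id:target_shop_id` format; the comma separator between entries, the fallback for an entry without a colon, and the names `PersonaEntry` and `parse_persona_entries` are assumptions made for illustration.

```python
from typing import List, NamedTuple, Optional


class PersonaEntry(NamedTuple):
    persona_id: str
    target_shop_id: Optional[str]  # None = use the persona's own shop (assumed fallback)


def parse_persona_entries(raw: str) -> List[PersonaEntry]:
    """Parse comma-separated 'persona_id[:target_shop_id]' items (assumed layout)."""
    entries = []
    for item in raw.split(","):
        item = item.strip()
        if not item:
            continue  # tolerate trailing commas and empty values
        persona_id, _, shop_id = item.partition(":")
        shop_id = shop_id.strip()
        entries.append(PersonaEntry(persona_id.strip(), shop_id if shop_id else None))
    return entries


# Example with made-up IDs; in the service the string would come from
# os.environ["ENGAGEMENT_PERSONA_IDS"].
print(parse_persona_entries("saki:myspirits, aran"))
```

Returning a structured entry rather than a bare ID makes it harder to drop the target shop on the way into internal functions, which is the kind of bug described later in this log.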

3. X API users/me Cache

Eliminated repeated calls to GET /2/users/me in the engagement process by caching the result in memory, making it a single call. This is a small but effective cost reduction.
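The caching idea can be sketched as follows. `MeCache` and the injected `fetch_me` callable are hypothetical; the real request code for `GET /2/users/me` is not shown in this post, so a stub stands in for it here.

```python
from typing import Callable, Dict, Optional


class MeCache:
    """Holds the GET /2/users/me result in memory for the life of the process."""

    def __init__(self, fetch_me: Callable[[], Dict]):
        self._fetch_me = fetch_me  # hypothetical callable that performs the real API request
        self._cached: Optional[Dict] = None

    def get(self) -> Dict:
        if self._cached is None:
            # Only the first call reaches the API; later calls reuse the result.
            self._cached = self._fetch_me()
        return self._cached


def fake_fetch_me() -> Dict:
    # Stub standing in for the real HTTP call, with made-up values.
    print("calling GET /2/users/me")
    return {"data": {"id": "123", "username": "saki"}}


cache = MeCache(fake_fetch_me)
cache.get()
cache.get()  # no second "calling ..." line is printed
```

With more than one authenticated account, one cache instance per account would be needed so that IDs are not mixed up.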

What went wrong

7 consecutive bug fixes

Repeated the cycle of test execution -> error -> fix -> deploy -> test… seven times. Fixing one bug would reveal the next, a classic “peeling an onion” situation.

Variable name mistake: Referenced the old name `persona_ids` instead of the new name `persona_entries` in the response generation part, leading to a NameError.

Non-existent columns: `is_active`, `reply_restriction`, and `interaction_count` were actually not present in the `target_accounts` table.

Non-existent table: The DDL for the `engagement_follows` table had not been executed (only designed in S107 and left incomplete).

PostgREST NULL trap: `.neq("column", "value")` does not return rows where the column is NULL. Constantly tripped up by PostgreSQL’s three-valued logic.

Cross-shop reference: While the target shop ID (MySpirits) was used for target retrieval, it was not passed to an internal function.

Immediate takeaway

Test locally before deploying. Repeatedly deploying to Cloud Run and testing in production wastes time. In this case, though, the local environment was missing the environment variables needed to connect to Supabase, so the production testing was partly unavoidable. Setting up a proper testing environment remains an open issue.

Lessons learned

1. Always confirm PostgREST NULL behavior.

In PostgreSQL, `column != 'value'` does not return rows where the column is NULL, because comparing NULL with anything yields NULL rather than true. How many times has this happened? Before using PostgREST filters, always consider “what happens to NULL rows.” The safe approach is to filter on the Python side.
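A minimal sketch of filtering on the Python side, assuming the rows have already been fetched as a list of dicts. The `status` column and the sample rows are hypothetical and do not reflect the real `target_accounts` schema.

```python
from typing import Any, Dict, List


def exclude_value(rows: List[Dict[str, Any]], column: str, value: Any) -> List[Dict[str, Any]]:
    """Keep rows whose column differs from value, including rows where it is None (SQL NULL)."""
    return [row for row in rows if row.get(column) != value]


rows = [
    {"handle": "a", "status": "blocked"},
    {"handle": "b", "status": "ok"},
    {"handle": "c", "status": None},  # NULL in the database
]

kept = exclude_value(rows, "status", "blocked")
print([row["handle"] for row in kept])  # ['b', 'c']
```

In Python, `None != "blocked"` is simply `True`, so the NULL row survives, whereas the SQL comparison would have dropped it. On the SQL side, `IS DISTINCT FROM` is PostgreSQL's NULL-safe inequality operator.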

2. Execute and confirm DDL at the time of design.

The `engagement_follows` table, designed in S107, was left untouched for three sessions. Simply writing DDL in design documents is insufficient. An “executed” checkbox should be included in design documents.

3. “Select and focus” applies to code as well.

Focusing on one persona is easier for debugging and cost management than automating all five. This is a good example of marketing strategy directly reflected in system design.

Observations from agent interaction

This time, the clear strategy provided by the user (Sayon) – “using SAKI to attract customers to Aran’s account, rather than operating Aran’s account directly” – prevented any wavering in the implementation direction.

Even if something is technically possible, correct implementation is impossible without understanding the business strategy. “Automating all personas equally” and “focusing on one persona” lead to entirely different code structures.

The seven consecutive deployments to Cloud Run are something to reflect on. Each deployment takes 3-4 minutes, so nearly 30 minutes were spent just waiting for deployments. In the future, a local testing environment will be prepared wherever possible.

S110 by the numbers

| Metric | Value |
|---|---|
| Commits | 9 (x-growth) |
| Bugs fixed | 7 |
| Cloud Run deployments | 8 |
| Tests succeeded | mutual-boost ✓, external engagement ✓ |
| Generated reply | “As a fortune-telling enthusiast, I’m excited!” (by SAKI → @uranainoaika) |